Cloud vs. AI: Same Stack, Different Game

Why the Cloud Playbook May Not Work for the AI Revolution

Cloud and SaaS have shaped the most recent technology shift over the last 10-15 years. AI is now reshaping the entire industry landscape. SaaS turned software into a subscription, and now AI is delivered as a service (AIaaS). During technology shifts, people tend to look for patterns and rhymes in past technology cycles and apply those learnings to the upcoming cycle.

From that perspective, it is natural to apply technology frameworks from the Cloud/SaaS evolution to AI as a rinse-and-repeat model. AI (agents, tools) can be viewed as a workload / application layer running in the cloud, so many of the constructs carry over (e.g. AIOps, orchestration frameworks, tools etc.). But there are some fundamental differences in unit economics, dependencies, and dynamics that set AI apart from Cloud/SaaS and present some unique challenges.

Let us analyze the comparison between Cloud/SaaS and AI further.

Cloud/SaaS at a glance (2006-present)

The core value proposition of cloud was to provide access to scalable infra and software via the internet through subscription-based services. The end-user value was efficiency, cost savings (reduced CapEx and OpEx) and flexibility. It took about 10-15 years for mainstream adoption.

Technology enablers

The key technology enablers have been:

Virtualization (VMware, Xen) enabled multi-tenancy

RESTful APIs enabled composable architectures

DevOps and CI/CD enabled rapid deployment and iteration

Containers and Kubernetes enabled workload portability and scale

Open source enabled commoditization of infra (Linux, Kubernetes) and uniformity (by and large) of the tech stack

Broadband connectivity enabled access and mobile enabled new use cases

Economics

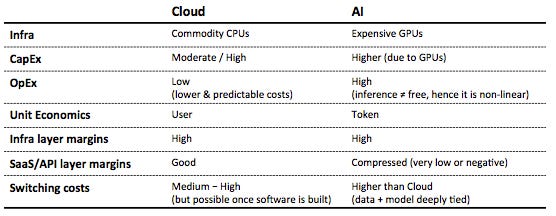

The economics of Cloud are tied to the fundamental nature of CPUs and the workloads running on them. CPUs are commoditized and multitask efficiently, allowing multiple apps to run simultaneously on the same hardware. This capability maximizes infrastructure utilization and enables incremental scaling as application demands grow.

Infrastructure layer: Cloud infrastructure is CapEx heavy, but once it runs at scale the marginal OpEx is low and the costs are more predictable. So, at scale, IaaS can be a high-margin business for the cloud providers, as seen in the case of AWS, Azure etc.
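The scale argument above can be sketched as a simple average-cost calculation. This is only an illustration under assumed numbers: the function name `unit_cost` and the values `FIXED_CAPEX` and `MARGINAL_OPEX` are hypothetical and are not taken from any provider's actual figures.

```python
def unit_cost(fixed_capex: float, marginal_opex: float, units: int) -> float:
    """Average cost per unit of work: amortized fixed cost plus marginal cost."""
    if units <= 0:
        raise ValueError("units must be positive")
    return fixed_capex / units + marginal_opex


# Hypothetical numbers, chosen only to show the shape of the curve.
FIXED_CAPEX = 1_000_000.0  # one-time infrastructure spend
MARGINAL_OPEX = 0.01       # cost of serving one additional unit of work

for units in (1_000_000, 10_000_000, 100_000_000):
    cost = unit_cost(FIXED_CAPEX, MARGINAL_OPEX, units)
    print(f"{units:>11,} units -> {cost:.4f} per unit")
```

As volume grows, the amortized CapEx term shrinks and the average cost approaches the marginal OpEx, which is why a low marginal OpEx matters so much for margins at scale.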

Workload/Ecosystem layer: IaaS made it possible to add PaaS and SaaS services on top of it, and so to run diverse workloads. The predictable costs and low marginal OpEx enabled positive economics for this layer. In addition to cloud being a positive ROI business for the cloud providers, it enabled network effects for the larger ecosystem of developers and enterprises in the platform and application layers as well. It was not just AWS that profited: Snowflake, Databricks, Zoom, etc. all had room to grow.

Pricing model: The lower/manageable OpEx enabled cloud providers to offer usage-based pricing and open a window for adoption by the ecosystem.

Switching costs: Switching is hard due to infra lock-in from provider-tied services (e.g. S3, IAM), but once the software is built, it is portable thanks to services that are common across providers (e.g. Kubernetes, DBaaS).

AI (LLMs/GenAI) at a glance (2018-present)

The core value proposition is to automate decision-making and cognition through access to hosted models (cloud/on-prem). The end-user value is productivity, creativity and automation. It is still early in its lifecycle, but evolving rapidly.

Technology enablers

The key technology enablers are:

Transformer-based architecture has been foundational to the development of LLMs

GPU (Nvidia) based acceleration of training and inference at scale

Large scale training datasets enabled the creation of large models with generalization capabilities

Training techniques and extensions of LLMs, such as RLHF and large reasoning models (LRMs), that have improved alignment and enabled reasoning

Economics

The economics of AI are tied to the fundamental nature of GPUs and the workloads running on them. GPUs are optimized for parallel processing, making them ideal for AI inference tasks that require handling large amounts of data concurrently. While GPUs can process multiple inference jobs in parallel if properly managed, the high upfront cost and the need for specialized infrastructure make scaling more expensive. Unlike CPUs, GPUs require careful workload distribution to achieve optimal utilization, and their cost introduces a different scaling dynamic compared to traditional CPU-based systems.

Infrastructure layer: AI infrastructure is CapEx heavy (GPUs are significantly more expensive than CPUs and more power hungry). Moreover, it is not only training that needs GPUs: AI inference needs them too (every token costs FLOPs), so the marginal cost of inference is high (compared to running a normal cloud workload) and hence the OpEx is quite high too. I have written about this in the context of economics of hosting private models in my earlier article.
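To make "every token costs FLOPs" concrete, here is a back-of-the-envelope sketch. It assumes the commonly used rule of thumb that a forward pass of a dense transformer costs roughly 2 FLOPs per parameter per token; that approximation, the function names, and all numbers below are assumptions for illustration and do not come from this article. The estimate ignores attention cost over long contexts, memory-bandwidth limits, and batching, so real-world figures can differ considerably.

```python
def forward_flops_per_token(n_params: float) -> float:
    """Rough forward-pass cost: about 2 FLOPs per parameter per token (assumed rule of thumb)."""
    return 2.0 * n_params


def gpu_seconds(n_params: float, n_tokens: int,
                peak_flops_per_s: float, utilization: float) -> float:
    """Estimated accelerator time to process n_tokens at a given effective utilization."""
    if not 0.0 < utilization <= 1.0:
        raise ValueError("utilization must be in (0, 1]")
    total_flops = forward_flops_per_token(n_params) * n_tokens
    return total_flops / (peak_flops_per_s * utilization)


# Hypothetical model and hardware figures, for illustration only.
N_PARAMS = 70e9       # 70B-parameter dense model
PEAK_FLOPS = 1e15     # accelerator peak throughput in FLOP/s
UTILIZATION = 0.3     # fraction of peak actually achieved

seconds = gpu_seconds(N_PARAMS, 1_000, PEAK_FLOPS, UTILIZATION)
print(f"~{seconds:.2f} GPU-seconds per 1,000 tokens")
```

The point of the sketch is that the compute bill is proportional to the number of tokens served, unlike a typical cloud workload where an idle or lightly used service costs little beyond its reserved capacity.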

Workload/Ecosystem layer: The workload layer runs much as it does in a typical cloud, i.e. AI can be treated as a workload. So DevOps can become AIOps, REST can be translated to token APIs etc.

However, unlike cloud where OpEx is low(er) and predictable, AI inference is not free (it's not host and forget), and this changes the economics of this layer significantly. AI inference can be 80–90% of the total lifecycle cost, a share that rises as models and usage scale. This is a key difference from cloud, where marginal costs decrease at scale.
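A rough sketch of why the inference share of lifecycle cost grows with usage: training is modeled as a one-time cost, while inference cost accrues with every token served. The function name `inference_share` and every number below are hypothetical, chosen only to show the trend rather than to reproduce the 80–90% figure for any real model.

```python
def inference_share(training_cost: float,
                    cost_per_million_tokens: float,
                    tokens_served: float) -> float:
    """Fraction of total lifecycle cost (training + inference) spent on inference."""
    inference_cost = cost_per_million_tokens * tokens_served / 1_000_000
    return inference_cost / (training_cost + inference_cost)


# Hypothetical inputs, for illustration only.
TRAINING_COST = 10_000_000.0   # one-time training spend
COST_PER_M_TOKENS = 2.0        # provider-side cost to serve 1M tokens

for tokens in (1e12, 1e13, 5e13):
    share = inference_share(TRAINING_COST, COST_PER_M_TOKENS, tokens)
    print(f"{tokens:.0e} tokens served -> inference is {share:.0%} of lifecycle cost")
```

Because the training term is fixed and the inference term scales with tokens served, the share keeps climbing as adoption grows, which is the opposite of the amortization effect seen in cloud.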

Hence, the ecosystem capture is not similar to cloud and may not be positive-sum for everyone in the ecosystem. I have done a more detailed analysis on the economics of AI agents in my earlier article.

In addition, the evolving data landscape (with high data costs and data lockdowns as described in my article) has an impact on the economics for the larger ecosystem as well. This can make it expensive for enterprises developing AI agents.

This creates a centralizing force in AI around Nvidia, AWS, OpenAI, etc., which didn’t happen in cloud in the same way.

Pricing model: While AI offers usage-based pricing too, the fundamental economics have evolved to token-based pricing, i.e. the price is tied to model effort, not just time or access. I have done a more detailed analysis on token economics in my earlier article.
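Token-based pricing can be sketched as follows. The `TokenPrice` class, the `request_cost` function, and the per-million-token prices are hypothetical and do not reflect any specific provider's price list; the sketch only assumes that input and output tokens are billed at separate rates.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TokenPrice:
    """Hypothetical price list, in currency units per one million tokens."""
    input_per_million: float
    output_per_million: float


def request_cost(price: TokenPrice, input_tokens: int, output_tokens: int) -> float:
    """Cost of a single request billed separately on input and output tokens."""
    total = (input_tokens * price.input_per_million
             + output_tokens * price.output_per_million)
    return total / 1_000_000


# Illustrative prices only.
PRICE = TokenPrice(input_per_million=3.0, output_per_million=15.0)

short_answer = request_cost(PRICE, input_tokens=2_000, output_tokens=500)
long_answer = request_cost(PRICE, input_tokens=2_000, output_tokens=5_000)
print(f"short answer: {short_answer:.4f}, long answer: {long_answer:.4f}")
```

The same prompt can cost several times more when the model produces a longer answer, which is what tying price to model effort rather than to time or access means in practice.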

Switching costs: AI lock-in resembles the iOS App Store lock-in.

Data and model are deeply tied to each other. In addition, fine-tuning, embeddings, adapters etc. add more stickiness than cloud ever did (and cloud-related lock-ins continue to exist for the non-AI part of the application).

While Model Context Protocol (MCP) has been conceived as an intermediary layer, it is still unproven, and comes with its own baggage (such as security implications discussed in my article securing AI agents in the wild).

In addition, a complete application would still need other Cloud/SaaS services such as API gateways and servers, load balancers, databases and data lakes, network + security + data fabrics, CI/CD and DevOps, orchestrators, monitoring and analytics etc. This would not only add to the costs of running the applications, but also create new cloud vendor lock-in scenarios, thus making switching costs higher.

Summary of cloud vs AI economics comparison

Inference isn’t free — every token is a billable event

Ecosystem Implications

All these dynamics have larger implications on the overall ecosystem.

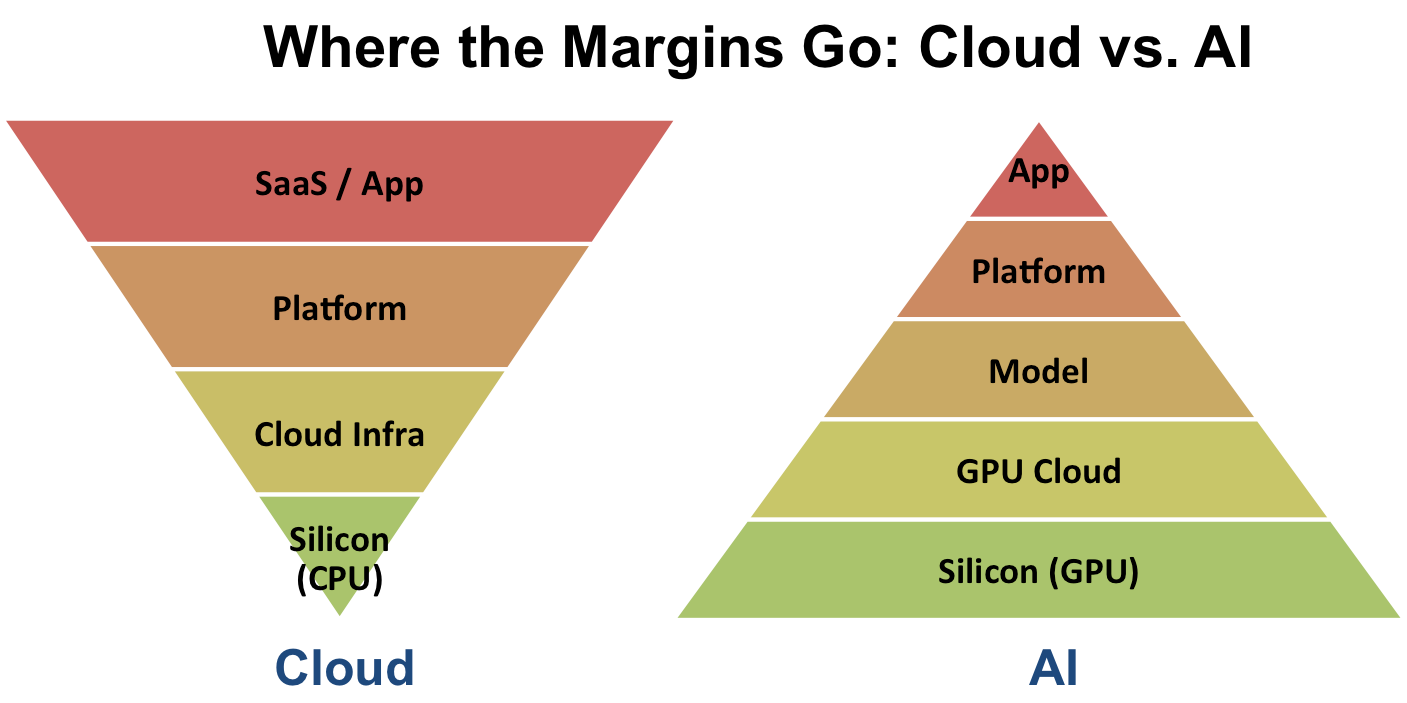

Silicon/Hardware Layer: In the case of Cloud/SaaS, Intel and AMD dominated this layer. In the case of AI, Nvidia, AMD and custom chips (e.g. TPUs) are the prominent players, with Nvidia dominating this layer (from GPUs to CUDA). It is very capital intensive for new entrants to emerge.

As of now, the lion's share of AI profits is held at this layer. This layer is also very power hungry, which influences choices about building datacenters, cooling etc. The economics of the rest of the layers depend on how the prices in this layer normalize.

Infra Layer: In the case of Cloud/SaaS, CSPs such as AWS, Azure, GCP dominated thanks to their deep pockets for capital investment and for building software layers. The same players will continue to have a huge presence in the AI era as well.

In addition, AI models will be part of the Infra layer, which will now include foundational model companies such as OpenAI, Anthropic etc. who will both compete and collaborate with the traditional CSPs. There is also a new emerging segment called neo-cloud or GPU-cloud, with companies such as Crusoe Cloud, CoreWeave, Lambda Labs, Together.ai etc.

Vertical integration seems to be the name of the game here - the providers want to offer all the way from GPU racks to orchestration, tools and even agents.

Platform Layer: In the case of Cloud/SaaS, this was dominated by companies such as Snowflake, Databricks and many others. In the case of AI, this layer will have LangChain and companies building Vector databases.

Who will dominate this layer is still emerging, but since the platform layer is essential for applications to run, this layer offers good opportunities for profitable companies to exist.

App Layer: In the case of Cloud/SaaS, this had a variety of companies such as Salesforce, Zoom and Shopify across different market verticals. In the case of AI, this will have companies such as Perplexity, Notion AI, Jasper, and everyone building AI agents for marketing, e-commerce and every possible segment.

This layer is very fragmented and the economics of this layer are unclear. Companies such as Perplexity are growing really fast, but whether they can build a profitable business remains to be seen. The companies termed OpenAI Wrappers will have a hard time competing, since the foundational model companies such as OpenAI themselves want to build it all. So the economics of this layer will heavily depend on whether infra prices normalize and allow them to be profitable.

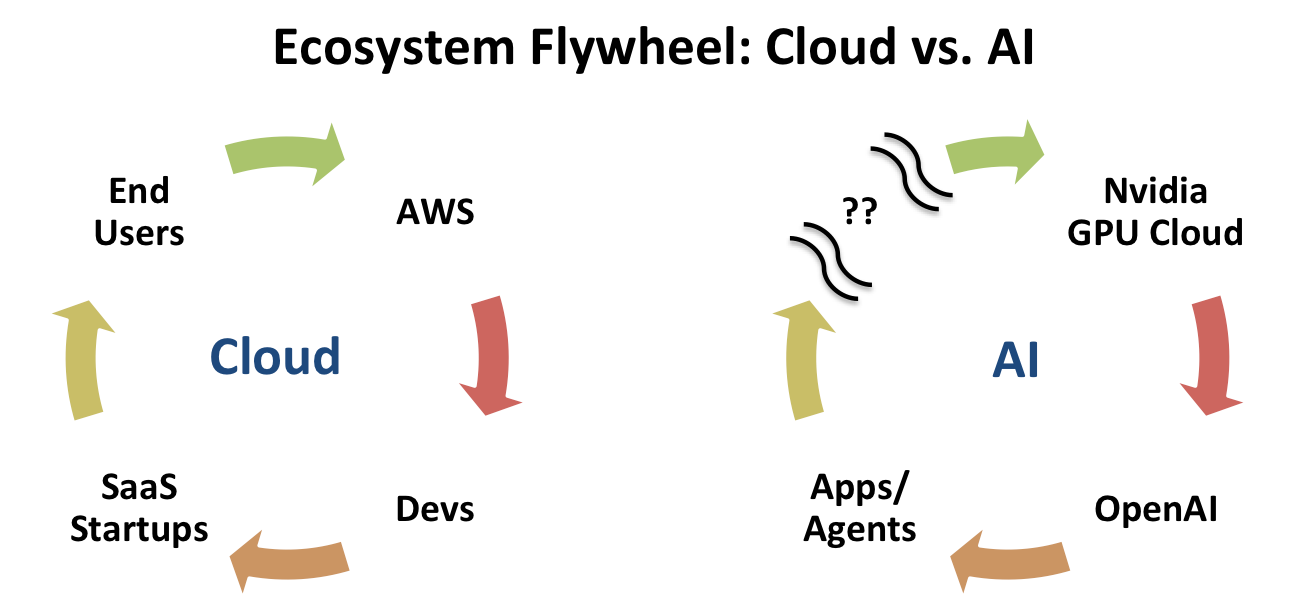

In the Cloud era, the ecosystem flywheel spun smoothly. AWS provided infrastructure that empowered developers, who built SaaS startups, which delivered value to end users, and their growth fed back into AWS. It was a positive-sum loop.

In contrast, the AI ecosystem is still finding its flywheel. Nvidia powers foundational model providers like OpenAI, which enable a wave of API-based applications. But for the flywheel to gain momentum, the economics need to extend meaningfully to the broader ecosystem, something that’s still evolving.

Without a clear path to downstream value creation, the AI loop risks centralizing power and stalling broader adoption / innovation.

Conclusion

AI is being structured like Cloud/SaaS at the abstraction level (orchestration, tools, platforms). But the underlying cost structures, the control over data/models, and the power dynamics of large foundational model companies wanting vertical integration make this fundamentally different and non-rhyming from Cloud in key areas.

This may lead to different outcomes for different players in the ecosystem. It may even hinder the emergence of a broad ecosystem like the one Cloud/SaaS fostered, unless inference costs, GPU prices, and access to data and models are addressed.